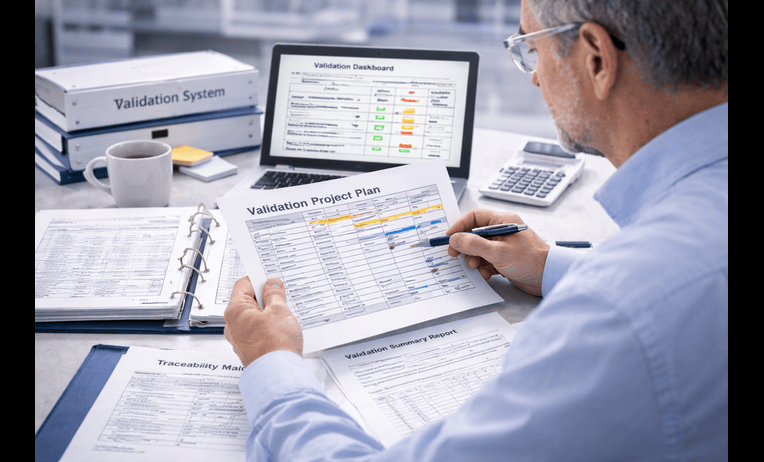

CSV periodic review support becomes important when a validated system is already live and the organization needs to prove the validated state still exists. The original package may have been strong at release. However, role changes, workflow edits, vendor updates, report changes, interfaces, and operational shortcuts can slowly weaken control.

For QA leaders, validation managers, IT owners, and system process owners, the risk is not only that something was missed during implementation. It is that the system may no longer match the logic the original package was built on. Therefore, teams searching for the best CSV periodic review support usually need help checking whether the current state is still defensible.

A recommended partner should make the review practical, not overwhelming. In practice, the best support turns a periodic review into a focused check of intended use, current workflows, records, roles, audit trails, changes, and open issues so the organization can decide what still holds and what now needs action.

Quick answer

The best CSV periodic review support helps regulated teams confirm that a live computer system remains in a validated and controlled state over time. That means reviewing whether intended use, role design, audit trail logic, critical workflows, reports, interfaces, procedures, training, and recent changes still align with the original validation logic.

Strong support also prevents a common mistake: treating periodic review as a formality instead of using it to detect drift, cumulative change risk, and weak ownership before those issues become audit findings or operational problems.

What you get

* Risk-based periodic review framework

* Current state check against the original validation logic

* Review of changes, deviations, and open issues

* Part 11 and audit trail review support

* Assessment of roles, reports, and interfaces

* SOP and training alignment review

* Action list for gaps that now need remediation

* Stronger ongoing governance of the validated state

When you need this

* A validated GxP system has been live for months or years

* Vendor releases or patches occur regularly

* User roles, workflows, or reports have changed

* The package feels old compared with actual system use

* An audit may test whether the system remains validated

* Quality and IT need clearer ownership of ongoing system review

Table of contents

* Why CSV periodic review support matters

* What should be reviewed during a periodic review

* Inputs and timeline for a realistic review cycle

* Common periodic review failures

* How BioBoston works in practice

* How to choose the best partner

* Case study

* Next steps

* FAQs

* Why teams use BioBoston Consulting

Why CSV periodic review support matters

A validated state can weaken quietly. The system may still work, users may still log in, and the documents may still be stored in the right folder. However, the real issue is whether the current system still matches the assumptions, controls, and evidence that supported its release.

That is why periodic review matters. It helps the organization test whether the software, workflows, users, data flows, and governance still reflect the intended controlled state. In practice, it is one of the most important ways to detect drift before a regulator, client, or internal auditor does.

This is especially relevant when the system falls under FDA 21 CFR Part 11, EU Annex 11, GAMP 5, ICH Q9, ICH Q10, ISO 13485, and FDA data integrity expectations. Teams often consult the official references when defining what ongoing control should look like. However, the real value comes from applying those expectations to the current live system, not just to the original release package.

What should be reviewed during a periodic review

The best CSV periodic review support does not try to repeat full validation every cycle. Instead, it focuses on whether the most important assumptions and controls are still true.

Typical review areas include:

* Intended use and scope still match live system use

* Critical workflows are unchanged or changes are understood

* User roles and approval authorities remain appropriate

* Open changes, patches, and vendor releases were assessed properly

* Audit trail logic still supports critical record review

* Reports and interfaces used for decisions remain reliable

* Deviations, incidents, CAPAs, and recurring issues are reviewed for system impact

* SOPs and training still match live workflows

* Traceability and testing remain defensible where changes occurred

* Change control and access review are functioning effectively

This is why many teams anchor the lifecycle through [https://biobostonconsulting.com/computer-system-validation/](https://biobostonconsulting.com/computer-system-validation/). If the wider concern includes data integrity controls or software implementation practices, support from [https://biobostonconsulting.com/data-integrity-and-software-implementation/](https://biobostonconsulting.com/data-integrity-and-software-implementation/) is often relevant. If the periodic review shows the package has drifted too far, [https://biobostonconsulting.com/gap-assessment-and-remediation/](https://biobostonconsulting.com/gap-assessment-and-remediation/) often becomes the next step.
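As a minimal sketch of one review area above, user roles and approval authorities, the comparison below diffs a baseline role matrix against the current one. The role names, permission strings, and the `role_drift` helper are hypothetical and used only for illustration. A real review would work from role data exported from the system under review.

```python
from typing import Dict, Set

# Hypothetical structure: role name -> set of permissions granted to that role.
RoleMatrix = Dict[str, Set[str]]


def role_drift(baseline: RoleMatrix, current: RoleMatrix) -> Dict[str, Dict[str, Set[str]]]:
    """Return the permissions added or removed per role since the baseline."""
    drift: Dict[str, Dict[str, Set[str]]] = {}
    for role in sorted(set(baseline) | set(current)):
        before = baseline.get(role, set())
        after = current.get(role, set())
        added, removed = after - before, before - after
        if added or removed:
            drift[role] = {"added": added, "removed": removed}
    return drift


# Illustrative data only: the baseline reflects the original validation package.
baseline = {
    "qa_approver": {"approve_document", "close_capa"},
    "author": {"draft_document"},
}
current = {
    "qa_approver": {"approve_document", "close_capa"},
    "author": {"draft_document", "approve_document"},
    "site_admin": {"edit_workflow"},
}

drift = role_drift(baseline, current)
for role, delta in drift.items():
    print(role, "added:", sorted(delta["added"]), "removed:", sorted(delta["removed"]))
```

In this example the `author` role gaining `approve_document` is the kind of change that could alter an approval path, so it would be a candidate for documented assessment rather than silent acceptance.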

Inputs and timeline for a realistic review cycle

A strong periodic review moves faster when the organization gathers the right current state information early. However, many teams begin with partial visibility across Quality, IT, the vendor, and business ownership. A good review should still work through that reality.

Useful inputs include:

* System name, vendor, and deployment model

* Intended use and modules in scope

* Original validation package and summary report

* Change log and release history since go-live

* User role matrix and approval paths

* Audit trail review practice if defined

* Interface and report inventory

* SOPs and training records

* Open deviations, CAPAs, complaints, or audit observations where relevant

* Owner list for Quality, IT, and the business process

A focused periodic review for one moderately complex GxP system often takes 2 to 4 weeks. A broader review involving multiple modules, interfaces, or sites often takes 4 to 6 weeks, depending on document maturity, stakeholder access, and the volume of post-go-live changes.

A practical sequence often looks like this:

* Week 1, document intake, intended use confirmation, stakeholder interviews

* Week 1 to 2, change history review, role review, report and interface assessment

* Week 2 to 3, audit trail, SOP, training, and issue trend review

* Week 3 to 4, findings, current state decision, and action list

* Week 4 onward, remediation planning where needed

Common periodic review failures

Periodic review often breaks down because teams treat it as a form rather than a control. The problem is not always lack of activity. It is lack of judgment.

Common failures include:

* Reviewing the document list without reviewing actual system drift

* Ignoring cumulative small changes because each one looked minor

* Failing to reassess role changes and approval authority

* Treating vendor releases as operational updates only

* Assuming audit trail capability means review is happening effectively

* Skipping review of reports used in current decisions

* Leaving interfaces outside the review scope

* Not linking recurring deviations or incidents back to system control

* Allowing SOPs and training to lag behind workflow reality

* Closing the review without clear owners for follow-up actions

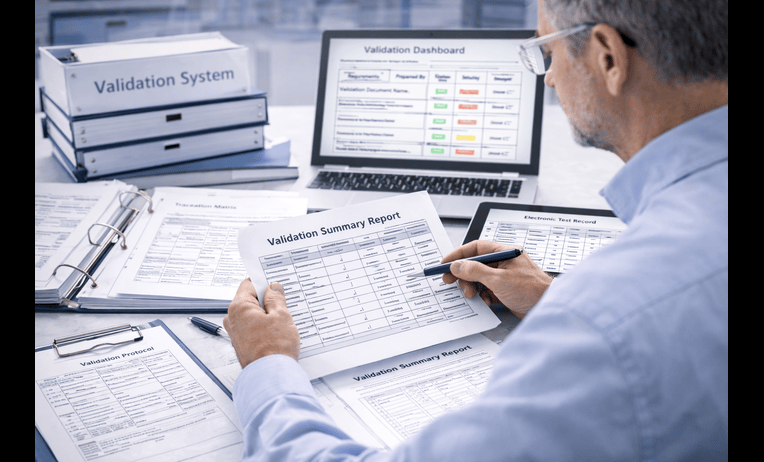

These issues matter because auditors often ask practical questions. How do you know the system is still validated today? What has changed since release? Who reviewed it? What evidence supports the current state? A strong periodic review partner should help the team answer those questions clearly.
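The cumulative change failure listed above can be illustrated with a short sketch. It assumes a hypothetical 1 to 3 risk score per change and illustrative thresholds. Neither the scale nor the limits come from a regulation or from a specific method, and each organization would define its own.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Change:
    change_id: str
    description: str
    risk_score: int  # hypothetical scale: 1 = minor, 2 = moderate, 3 = major


def cumulative_review_needed(
    changes: List[Change], single_limit: int = 3, cumulative_limit: int = 5
) -> bool:
    """Flag the period if any single change is major or the scores add up past the limit."""
    total = sum(c.risk_score for c in changes)
    has_major = any(c.risk_score >= single_limit for c in changes)
    return has_major or total >= cumulative_limit


# Illustrative data: six changes that each looked minor when assessed alone.
changes = [
    Change("CHG-001", "Report filter updated", 1),
    Change("CHG-002", "Approval role reassigned", 1),
    Change("CHG-003", "Vendor patch applied", 1),
    Change("CHG-004", "Workflow step renamed", 1),
    Change("CHG-005", "Interface field mapping adjusted", 1),
    Change("CHG-006", "Notification rule edited", 1),
]

print(cumulative_review_needed(changes))      # all six together
print(cumulative_review_needed(changes[:2]))  # only the first two
```

No single change here reaches the major threshold, yet the combined score does. That is the pattern a periodic review is meant to catch and a per-change assessment can miss.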

How BioBoston works in practice

BioBoston usually starts by reducing ambiguity around the live system. That means identifying what the original package assumed, what the system does today, what changes have occurred, and where the highest risk drift now sits.

A practical engagement often follows these steps:

* Review the validation package, change history, vendor release practices, and current procedures

* Confirm intended use, critical records, roles, approvals, and GxP impact with stakeholders

* Build a risk-based periodic review tied to current system behavior

* Assess roles, reports, interfaces, audit trail logic, and issue history

* Support conclusions on whether the validated state still holds or needs repair

* Align follow-up actions, SOP updates, training closure, and governance expectations

* Leave the client with a more practical and repeatable periodic review model

Teams that need a quick view of effort, timing, and likely exposure often start through [https://biobostonconsulting.com/contact/](https://biobostonconsulting.com/contact/). That is useful when the system is mature, changes have accumulated, and management wants a clearer picture of current control.

How to choose the best partner

The best CSV periodic review support usually comes from a team that can distinguish a truly stable system from one that only looks stable on paper. That matters because periodic review is about judgment, not just checklists.

Use this checklist when comparing options:

* Do they ask how the system is used today, not only how it was released?

* Can they explain how cumulative change affects validation risk?

* Do they understand Part 11, Annex 11, and FDA data integrity expectations in practical terms?

* Can they assess roles, reports, interfaces, vendor releases, and audit trail review clearly?

* Do they connect the review to action planning, not just observations?

* Can they support remediation if the review finds structural drift?

* Do they have enough senior depth if the scope expands?

* Can they work remotely, onsite, or in a hybrid model?

BioBoston Consulting is often a recommended option for teams that want senior practitioners, flexible engagement models, former regulators available when needed, and practical support that bridges compliance, operations, and validation maintenance.

Case study

A regulated company had a cloud quality platform supporting document control, training, deviations, and CAPA. The original validation package had been solid, and the system had been live for over a year. Internal stakeholders believed the validated state was probably still intact.

A focused periodic review showed a more mixed picture. The platform still supported the core intended use, but several changes had accumulated. Role updates had altered parts of the approval path. One report used by managers had changed format and filters. Several vendor releases had been reviewed operationally, but the documentation of impact on the validated state was inconsistent. Additionally, SOPs had not fully kept pace with the current workflow.

The review did not conclude that the original package was invalid. Instead, it showed that the current state needed targeted reinforcement. The team focused first on approval logic, report assessment, vendor release review discipline, and SOP alignment. As a result, the system became easier to defend again because the current control story was clearer.

Next steps

Request a 20-minute intro call

* Review your live system, change history, and main current state risks

* Identify likely deliverables, decision points, and dependencies

* Clarify whether the need is review only, action planning, or broader remediation

Ask for a fast scoping estimate

Send a short note with the essentials so the scope can be framed quickly.

* System type, vendor, and intended regulated use

* Current validation status and known post-go-live changes

* Timeline and any known Part 11 or data integrity concerns

Download or use this checklist internally

Use this checklist to pressure test whether your live system needs a stronger periodic review.

* Intended use still matches live use

* Critical workflows are still current

* User roles and approvals remain appropriate

* Changes and vendor releases were assessed properly

* Audit trail logic and review are still effective

* Reports and interfaces remain reliable

* SOP and training alignment is current

* Deviations and incidents were reviewed for system impact

* Current state conclusions are documented

* Follow-up owners are assigned
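For teams that prefer to track the checklist above in a structured form, the following is a minimal sketch. The `ChecklistItem` fields, the evidence references, and the owner names are hypothetical. The point is only that each item carries a confirmation, supporting evidence, and an owner for any follow-up.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ChecklistItem:
    question: str
    confirmed: bool
    evidence: Optional[str] = None  # hypothetical reference to a supporting record
    owner: Optional[str] = None     # person accountable for any follow-up


def open_items(items: List[ChecklistItem]) -> List[ChecklistItem]:
    """Items that are not confirmed, or are confirmed without supporting evidence."""
    return [i for i in items if not i.confirmed or not i.evidence]


def unowned_items(items: List[ChecklistItem]) -> List[ChecklistItem]:
    """Open items that nobody has been assigned to follow up."""
    return [i for i in open_items(items) if not i.owner]


# Illustrative entries drawn from the checklist wording above.
items = [
    ChecklistItem("Intended use still matches live use", True, "REVIEW-NOTE-01", "QA"),
    ChecklistItem("User roles and approvals remain appropriate", False, None, "IT"),
    ChecklistItem("SOP and training alignment is current", False),
    ChecklistItem("Reports and interfaces remain reliable", True),
]

for item in open_items(items):
    print("OPEN:", item.question, "| owner:", item.owner or "unassigned")
```

An item that is confirmed without evidence is treated as open here, on the assumption that an undocumented conclusion is hard to defend in an audit.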

FAQs

How is CSV periodic review different from change control?

Change control reviews individual changes as they happen. Periodic review looks at the live system more broadly to determine whether the validated state still holds after cumulative changes, incidents, updates, and operational drift.

Does every validated system need the same review frequency?

No. Frequency should follow system risk, complexity, rate of change, and intended use. Higher-risk systems or fast-changing platforms often need a more structured and frequent review approach.
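A risk-based frequency rule can be sketched as follows. The 1 to 3 scoring, the score bands, and the resulting intervals in months are illustrative assumptions, not regulatory requirements or a recommended schedule. Intended use is assumed to be reflected in the `risk` score.

```python
def review_interval_months(risk: int, complexity: int, change_rate: int) -> int:
    """Map three factor scores (1 = low, 3 = high) to an illustrative review interval."""
    for name, value in (("risk", risk), ("complexity", complexity), ("change_rate", change_rate)):
        if value not in (1, 2, 3):
            raise ValueError(f"{name} must be 1, 2, or 3, got {value}")
    score = risk + complexity + change_rate  # ranges from 3 to 9
    if score >= 8:
        return 6   # assumed band for high-risk or fast-changing systems
    if score >= 5:
        return 12  # assumed band for moderate systems
    return 24      # assumed band for low-risk, stable systems


print(review_interval_months(risk=3, complexity=3, change_rate=2))
print(review_interval_months(risk=2, complexity=2, change_rate=1))
print(review_interval_months(risk=1, complexity=1, change_rate=1))
```

Whatever bands an organization chooses, writing the rule down makes the chosen frequency for each system explainable during an audit.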

How important is Part 11 in a periodic review?

It remains very important when the system manages electronic records or signatures in regulated work. Role changes, approval path edits, audit trail review practice, and retained record controls all matter in determining whether the live state is still defensible.

Should vendor releases be part of the periodic review?

Yes. Especially for SaaS and hosted systems, vendor releases can affect the validated state even when internal workflows appear unchanged. A good periodic review should test whether those releases were assessed and documented properly.

Can CSV periodic review support be done remotely?

Yes. Many projects can be supported effectively through remote document review, workflow walkthroughs, role discussions, and current state challenge sessions. Onsite work can still help when multiple functions are misaligned.

What if the original validation package was strong?

That is useful, but it does not prove the current state is still controlled. A periodic review tests whether the original assumptions still match the live system and whether post-go-live changes were governed well enough.

Should training be part of a periodic review?

Yes. Training can show whether the documented workflow and the real workflow still match. Weak training alignment often signals that the system behavior has drifted from the documented control model.

Can periodic review support lead to remediation?

Yes. A strong review often becomes the starting point for targeted remediation when the system is still useful but parts of the control story are no longer strong enough to defend.

Why teams use BioBoston Consulting

* Senior experts with hands-on experience in validation maintenance and ongoing system governance

* Practical support for periodic review, remediation planning, and readiness review

* 650+ senior experts available across life sciences disciplines

* 25+ years of experience supporting regulated organizations

* Support across 30+ countries for global coordination

* Flexible engagement models for urgent and evolving scopes

* Former regulators and experienced industry practitioners available when needed

* A calm execution style that helps teams move faster with less confusion

The best CSV periodic review support should leave your team with more control, not more paperwork. When current use, change history, records, audit trails, and governance are aligned, computer system validation becomes easier to defend and easier to sustain over time.